5 Types of Checks Every Shopify Store Should Have

February 5, 2025

Learn how to detect Shopify store slow page load times, issues with the checkout process, and outages have a significant impact on sales.

Monitor your Cloud Services with Cloud Status

Trusted by thousands to monitor your websites & APIs for complete visibility, speed & reliability from anywhere.

Trusted for years to

stay up

Trusted for years to

stay up Flexible & Fair

Pricing

Flexible & Fair

Pricing Real Time & Reliable

Alerting

Real Time & Reliable

Alerting Monitor

Internal & External Websites

Monitor

Internal & External Websites Quick

Identification & Resolution of Outages

Quick

Identification & Resolution of Outages

14-day free trial

No credit card required

24/7/365 real-time support

Resilient & secure platform

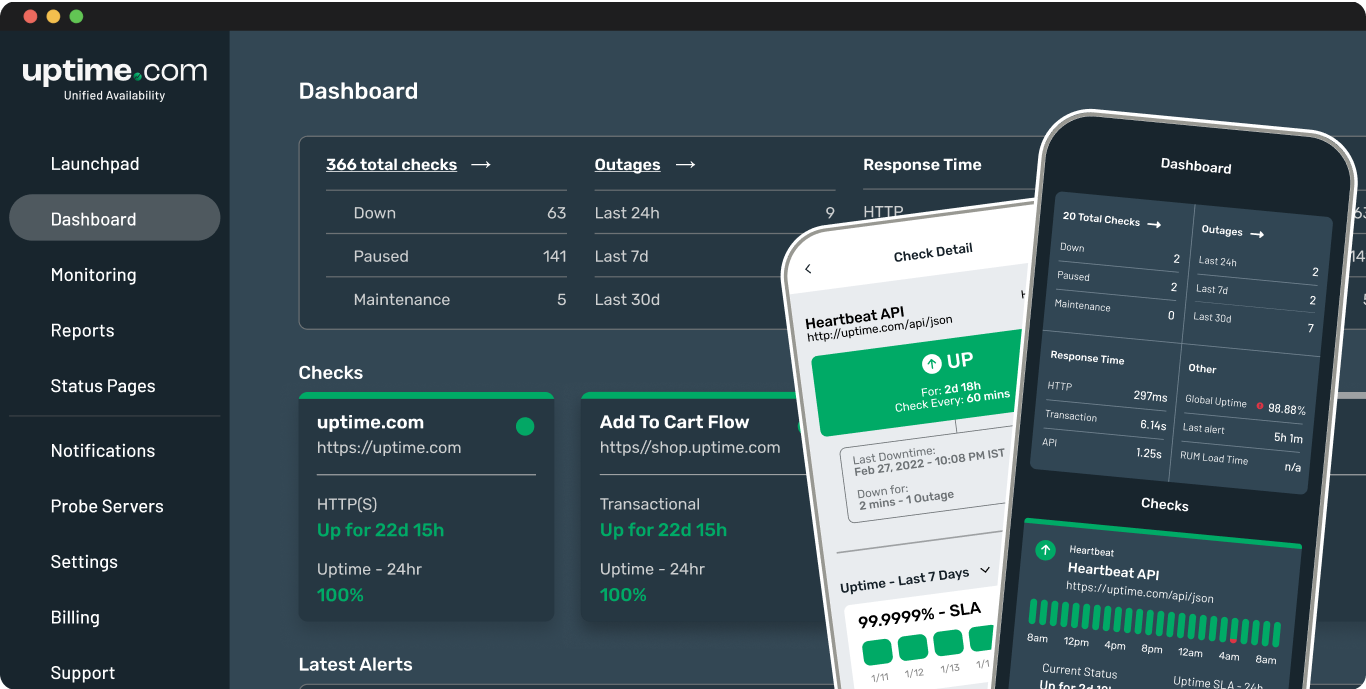

Simple & intuitive industry leading Enterprise-grade features delivered at a fair price, that are continuously improving.

The all-in-one incident management platform to communicate updates to private and public audience.

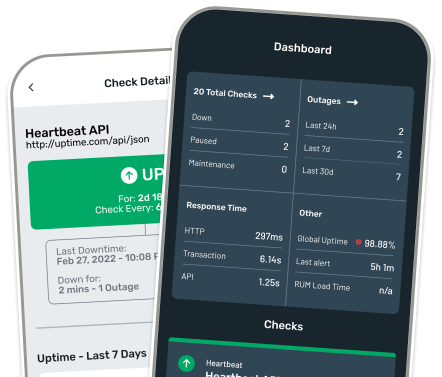

Create a Status PageEffortlessly monitor APIs in real-time with our robust API monitoring solution. Ensure uptime, detect issues, and optimize performance.

API ChecksGain insights, detect bottlenecks, and boost website performance to enhance user experience for your business.

RUM ChecksConfigure sophisticated uptime monitoring checks without sacrificing simplicity. Detect downtime, optimize speed, and ensure a seamless user experience.

Website ChecksGet real-time data and statuses on private monitoring tests behind the firewall or anywhere your infrastructure is deployed. Detect anomalies impacting internal systems. Precise, confidential, and always in your control.

Private Location ChecksContinuously test the availability, performance & functionality for all of your website's critical flows with real browser synthetics. Detect failures, boost website reliability by adding new checks within minutes.

Synthetic ChecksDon't just take our word for it. See how we compare in functionality & user feedback. Honest & Fair Pricing that scales with your business.

20+ integrations

for all plans

Synthetic & API monitors

for all plans

Unlimited user accounts

for Premium Plans

Global Monitoring

Network Proxy Free

20+ Integrations

in ALL

plans.

Unlimited Integrations.

Synthetic & API monitors

for

ALL plans.

Add-On's Available in ALL

Plans.

Unlimited user accounts in

Premium Plans.

SSO Available in

ALL Plans.

80+

Global Monitoring

Points of Presence.

Dedicated Monitoring Locations.

SSL/Certifificate

TCP Port

NTP

Domain Blacklist

SSH

UDP

Malware/Virus

SMTP

IMAP

Whois/Domain

DNS

POP

HTTPS

Ping

API Monitoring

Transaction Monitoring

Micro-Transaction Checks

Page Speed

Extended Transaction Checks

Webhook Monitoring

Group Checks

Heartbeat Monitoring

Cloud Status

Keep users & customers updated when an incident is resolved. Include a summary of the issue , impact to your business and the steps taken to resolve it.

Provide real-time, up-to-the-minute status of incident updates, including any performance issues, scheduled maintenance and service disruptions.

Monitor response time and availability from locations around the world from public locations and private locations to enable complete visibility.

Notify users about the date, time and expected impact of a system or service maintenance. Allow users to subscribe to maintenance notifications.

Monitor services anywhere they are running. Deploy our Private Location monitor to enable visibility in Private Cloud or leverage our extensive Public Cloud Locations for complete visibility.

Leverage the most reliable geographically distributed observability network to ensure granular visibility to where your customers reside and ensure your services are reachable everywhere.

Configure a page speed check that run daily to ensure your sites perform well over time. Ensure your website loads fast for visitors worldwide, optimized for performance & customer experience.

Get alerts anytime, anywhere. Our Advanced Alerting engine is highly tuned to eliminate false positives. Send alerts to multiple integrations to enable rapid response and resolution.

Customizable dashboards with critical uptime performance data needed for NOC visibility, continuous insights and realtime response to alerts and failures.

Stay in control and gain full visibility across on-premise, cloud and hybrid infrastructures. Don't limit your monitoring visibility - ensure complete coverage worldwide!

View Our Global NetworkSecure - We build trust with a secure monitoring management platform, always putting your data protection first.

Reliable - Don’t let unexpected web incidents leave your customers in the dark. We strive for reliability in everything we build and operate for your peace of mind.

Considering Website Monitoring or any of our monitoring solutions but not sure where to start? Let us take care of the implementation, enabling you to focus on what matters most in your business.

Our expert team goes beyond simple setup; we provide detailed configurations, personalized training and robust support, ensuring you can fully leverage the Uptime.com platform.

14-days free trial

No credit card required

24/7/365 real-time support

Resilient & secure platform

Recommended by hundreds of verified users on:G2, SourceForge and TrustPilot

Consistently the highest Net Promoter Score of any website monitoring provider in the market

Award-winning support team available 24/7/365 to proactively resolve issues

AICPA SOC 2 Type II audit completed, confirming the highest standards of compliance

$67/mo

vs

Hosted Status Pages

$250/mo

+

Uptime Monitoring - 3 users

$120/mo

$370/mo

February 5, 2025

Learn how to detect Shopify store slow page load times, issues with the checkout process, and outages have a significant impact on sales.

February 12, 2025

Learn how to protect your business from costly API failures and which features matter when selecting an API monitoring tool or solution.

January 30, 2025

Learn why manually checking status pages is efficient and automated status page monitoring of your third party dependencies is essential.